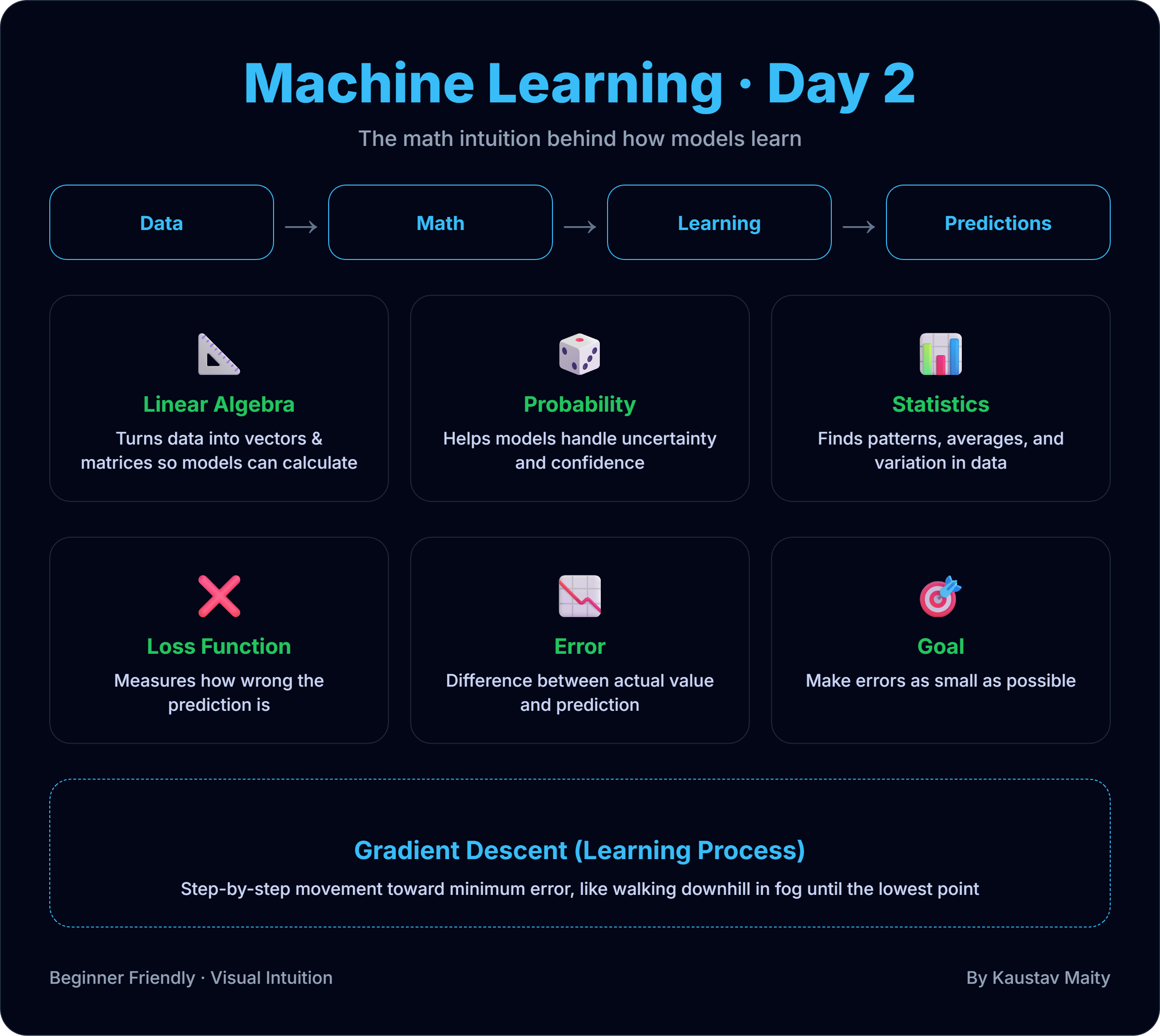

📐 Machine Learning Mathematics: The Intuition You Actually Need (Day 2)

Many people feel scared when they hear “Machine Learning Mathematics”.

But the truth is 👉 you don’t need to be a mathematician to understand ML.

You only need to understand what the math means, not memorize formulas.

This post explains the core math ideas behind machine learning in simple terms.

📌 Why Mathematics Is Important in Machine Learning

Machine learning models are not magic.

They are mathematical functions that:

- Take input data

- Perform calculations

- Produce predictions

Math helps us answer questions like:

- How does the model learn?

- How does it improve?

- How do we know if it’s wrong?

🧮 1. Linear Algebra (Data Representation)

Machine learning works with numbers, and linear algebra is how we organize them.

Simple intuition

- Single value → number

- Multiple values → vector

- Table of values → matrix

Example:

- One house → size = 900

- Many houses →

[500, 800, 1000, 1200]

📌 ML models treat datasets as matrices and perform calculations on them.

You don’t need to master matrix math now — just know:

Data in ML = vectors & matrices
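To make the number → vector → matrix idea concrete, here is a minimal sketch in plain Python. The house sizes come from the example above; the bedroom counts and the `weights` values are made up for illustration, and real projects would usually use a library such as NumPy instead of nested lists.

```python
# A single value (scalar): the size of one house.
size = 900

# A vector: the sizes of several houses.
sizes = [500, 800, 1000, 1200]

# A matrix: one row per house, one column per feature (size, bedrooms).
# The bedroom counts are invented for this illustration.
houses = [
    [500, 1],
    [800, 2],
    [1000, 3],
    [1200, 3],
]


def dot(a, b):
    """Dot product: multiply matching entries and add them up."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical weights: price per unit of size, price per bedroom.
weights = [3, 10]

# A linear model's predictions are one matrix-vector product:
# each row of the matrix is combined with the weight vector.
predictions = [dot(row, weights) for row in houses]
print(predictions)  # [1510, 2420, 3030, 3630]
```

Every row goes through the same calculation, which is why ML libraries store datasets as matrices.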

🎲 2. Probability (Handling Uncertainty)

Machine learning deals with uncertainty, not guarantees.

Examples:

- Email is spam with 90% probability

- Customer may churn with 70% probability

Probability helps models answer:

- How confident is this prediction?

- How likely is an event?

📌 Many ML models don’t just say “YES or NO”

👉 They say “how likely”, and a yes/no decision comes from applying a cutoff to that probability
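One common way a model turns a raw score into a probability is the sigmoid function. The sketch below assumes a hypothetical score of `2.2` for one email and a cutoff of `0.5`; both are illustrative choices, not values from any particular model.

```python
import math


def sigmoid(score):
    """Squash any real-valued score into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-score))


def classify(probability, threshold=0.5):
    """Turn a probability into a yes/no label using a cutoff."""
    return probability >= threshold


spam_score = 2.2                         # hypothetical raw model output
spam_probability = sigmoid(spam_score)   # roughly 0.90
is_spam = classify(spam_probability)     # True, because 0.90 >= 0.5

print(f"Spam probability: {spam_probability:.2f}, flagged: {is_spam}")
```

Raising the threshold makes the filter more cautious about flagging email, which is a decision you make on top of the probability, not something the math decides for you.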

📊 3. Statistics (Learning from Data)

Statistics helps models learn patterns from data.

Key ideas:

- Mean → average value

- Variance → how spread out data is

- Distribution → how often each value occurs

Example:

- Average house price in an area

- Price variation across locations

📌 Statistics helps us:

- Understand data

- Detect noise

- Measure model performance
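Mean and variance are easy to compute with Python's built-in `statistics` module. The prices below are made-up numbers, used only to show what the two summaries tell you.

```python
import statistics

prices = [40, 45, 50, 55, 60]  # made-up house prices for illustration

mean_price = statistics.mean(prices)      # average value
variance = statistics.pvariance(prices)   # average squared distance from the mean
std_dev = statistics.pstdev(prices)       # square root of the variance

print(mean_price)  # 50
print(variance)    # 50
print(round(std_dev, 2))
```

The standard deviation is in the same units as the prices, so it is often easier to read than the variance.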

📉 4. Loss Function (How Wrong Is the Model?)

A model learns by making mistakes.

A loss function measures:

“How wrong is the prediction?”

Example:

- Actual price = 50

- Predicted price = 45

- Error = 5

The goal of ML:

Minimize the loss

Smaller loss = predictions closer to the actual values.
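The post does not name a specific loss, so as an assumption this sketch uses squared error, one widely used choice. The first call uses the numbers from the example above; the three-house lists are invented.

```python
def squared_error(actual, predicted):
    """Loss for one prediction: the error, squared so it is never negative."""
    return (actual - predicted) ** 2


def mean_squared_error(actuals, predictions):
    """Average squared error over many predictions."""
    errors = [squared_error(a, p) for a, p in zip(actuals, predictions)]
    return sum(errors) / len(errors)


# The single example from the text: actual 50, predicted 45.
single_loss = squared_error(50, 45)
print(single_loss)  # 25

# Invented values for three houses.
actual_prices = [50, 60, 70]
predicted_prices = [45, 62, 70]
mse = mean_squared_error(actual_prices, predicted_prices)
print(round(mse, 2))  # (25 + 4 + 0) / 3
```

Squaring means an error of 5 costs much more than an error of 2, so large mistakes dominate the loss.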

🏃‍♂️ 5. Gradient Descent (How Models Learn)

This is the heart of how machine learning models learn.

Simple analogy

Imagine standing on a mountain in fog 🌫️

You want to reach the lowest point.

You:

- Take small steps

- Move downward

- Stop when you can’t go lower

That’s Gradient Descent.

📌 In ML:

- Mountain height = loss

- Direction = opposite of the gradient (the gradient points uphill)

- Step size = learning rate

The model slowly improves by reducing errors step by step.
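The foggy-mountain walk can be sketched in a few lines. As an assumption for illustration, the "mountain" here is the toy loss `(w - 3) ** 2`, whose lowest point is at `w = 3`; the starting point, learning rate, and step count are arbitrary choices.

```python
def loss(w):
    """Toy loss: lowest (zero) when w equals 3."""
    return (w - 3) ** 2


def gradient(w):
    """Slope of the toy loss at w (its derivative)."""
    return 2 * (w - 3)


w = 0.0               # arbitrary starting point on the mountain
learning_rate = 0.1   # step size

for step in range(100):
    # Step against the gradient, because the gradient points uphill.
    w = w - learning_rate * gradient(w)

print(round(w, 4))  # 3.0
```

If the learning rate is too large (try `1.5` here), each step overshoots the valley and `w` moves further away instead of settling.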

🧠 How All Math Fits Together

Here’s the full picture:

- Linear Algebra → handles data

- Probability → handles uncertainty

- Statistics → learns patterns

- Loss Function → measures mistakes

- Gradient Descent → fixes mistakes

Together, they allow a machine to learn from data.
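Here is a minimal sketch of the pieces working together: data stored as vectors, a mean squared error loss, and gradient descent adjusting one weight. The data is invented so that the true relationship is `price = 2 * size`, and the model `price = w * size` with no intercept is a simplification for illustration.

```python
# Data as vectors (invented values where price = 2 * size).
sizes = [1.0, 2.0, 3.0, 4.0]
prices = [2.0, 4.0, 6.0, 8.0]


def predict(w, x):
    """One-weight linear model."""
    return w * x


def mse_loss(w):
    """Mean squared error of the model over the whole dataset."""
    errors = [(predict(w, x) - y) ** 2 for x, y in zip(sizes, prices)]
    return sum(errors) / len(errors)


def mse_gradient(w):
    """Derivative of the mean squared error with respect to w."""
    terms = [2 * (predict(w, x) - y) * x for x, y in zip(sizes, prices)]
    return sum(terms) / len(terms)


w = 0.0
learning_rate = 0.01

for step in range(200):
    w = w - learning_rate * mse_gradient(w)

print(round(w, 4))            # 2.0
print(round(mse_loss(w), 6))  # 0.0
```

Libraries such as scikit-learn and TensorFlow automate this loop, but the idea underneath is the same: predict, measure the loss, step downhill, repeat.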

⚠️ Common Beginner Mistake

❌ Trying to memorize formulas

✅ Understanding intuition

Most ML engineers:

- Use libraries (scikit-learn, TensorFlow)

- Focus on why things work

- Learn math gradually while building models

📝 Final Thoughts

You don’t need to master all math before machine learning.

Start with:

- Clear intuition

- Practical examples

- Real-world thinking

As you build models, the math will naturally make sense.

Machine learning math is not hard —

it’s just logic written with numbers.
